How ageing networks, volatile supply, surging demand, and a new generation of technology are redesigning the grid.

The electrical grid is, by any measure, the largest machine ever built. For most of the twentieth century it operated on a simple premise: large, centralised generators pushed electrons in one direction, down high-voltage transmission lines, through substations, and into homes and businesses. That premise held remarkably well for decades. But over the last decade, as renewable penetration has risen and demand patterns have shifted, cracks have begun to show.

Three forces are converging at once:

- Ageing infrastructure: across the United States, more than 70% of power transformers are over 25 years old and 70% of transmission lines have exceeded their intended design life. In Europe, nearly 40% of transmission infrastructure is over 40 years old.

- The energy transition: the grid’s original design logic has been inverted. Instead of a few hundred large generators feeding power downward, the system must now accommodate millions of distributed, intermittent, and bidirectional sources, from rooftop solar to offshore wind.

- Explosive demand growth: AI, data centres, and the electrification of transport and heating are pulling electricity demand sharply upward, catching most forecasters off guard.

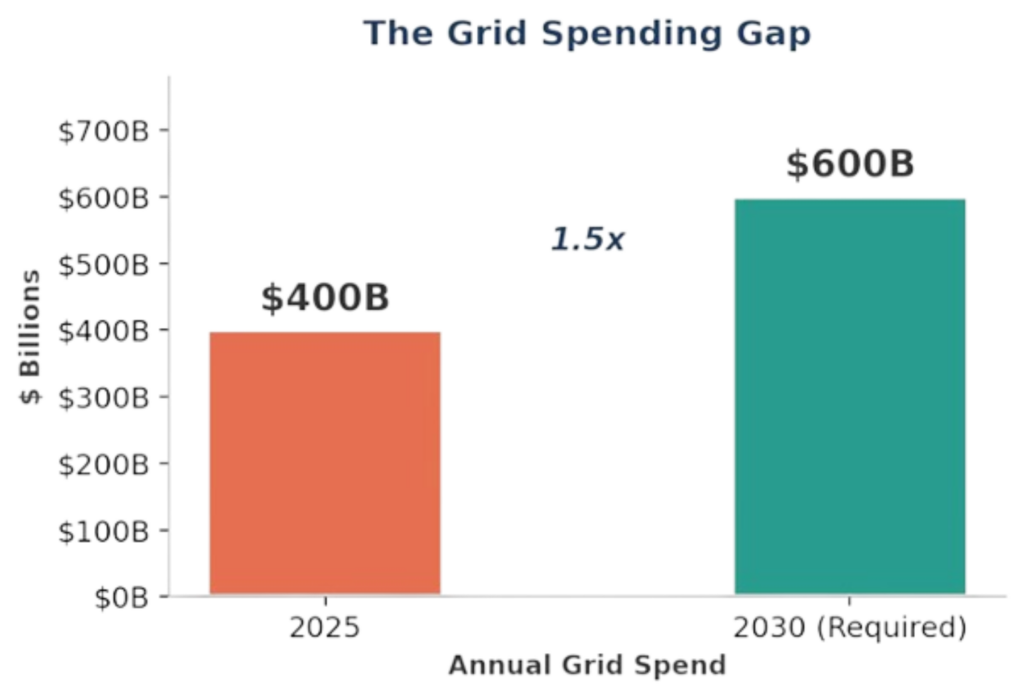

The grid spending gap

Global spending on electricity grids currently sits at roughly $400 billion a year. The IEA says this needs to rise to over $600 billion by 2030 just to keep pace with national targets. That 1.5x increase in annual spend must materialise within five years, yet grid projects take a decade or more to build, so much of the increase will need to go into technology and process improvements rather than just new steel in the ground.
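Assuming 2025 as the base year, lifting annual grid spending from roughly 400 to 600 billion dollars over five years implies a compound growth rate g of:

$$
g = \left(\frac{600}{400}\right)^{1/5} - 1 = 1.5^{1/5} - 1 \approx 0.084
$$

In other words, grid spending would need to grow by about 8.4% every year, compounding, through 2030.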

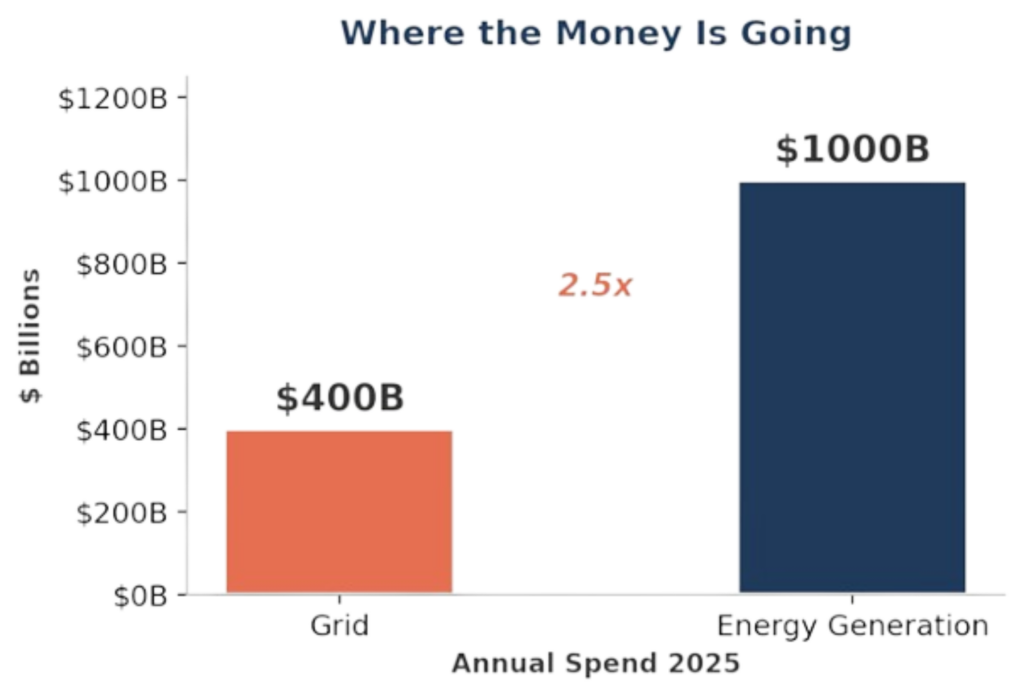

The picture gets worse when you look at where investment is flowing. Generation investment (principally renewables) has surged past $1 trillion a year, roughly 2.5x what is going into the grid infrastructure needed to deliver that power.

Governments, utilities and independent developers are building supply far faster than the wires to carry it. If this imbalance persists, the energy transition could stall.

Meeting national targets requires adding or refurbishing over 80 million kilometres of grid infrastructure by 2040, the equivalent of rebuilding the entire existing global network. Yet the components needed (high-voltage transformers, subsea cables, switchgear) face supply chains that are already stretched thin, with lead times for large power transformers extending to three years or more.

The consequences of underinvestment are already measurable in connection queues. In the United States, more than 2,600 GW of proposed generation and storage capacity is waiting for grid interconnection, more than twice the country’s current installed capacity. Across Europe, over 1,700 GW of renewable and hybrid projects are stuck in connection queues. In 2024 alone, the cost of curtailing renewable output to keep European grids stable reached €8.9 billion, with 72 TWh of clean power effectively wasted because the wires could not carry it.

Every gigawatt of solar, every battery storage project, every EV charging network, and every data centre expansion is ultimately constrained by the same question: can the grid get the power there?

The AI demand shock

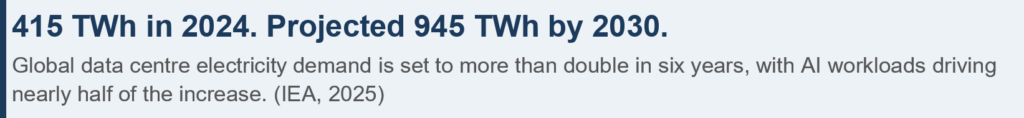

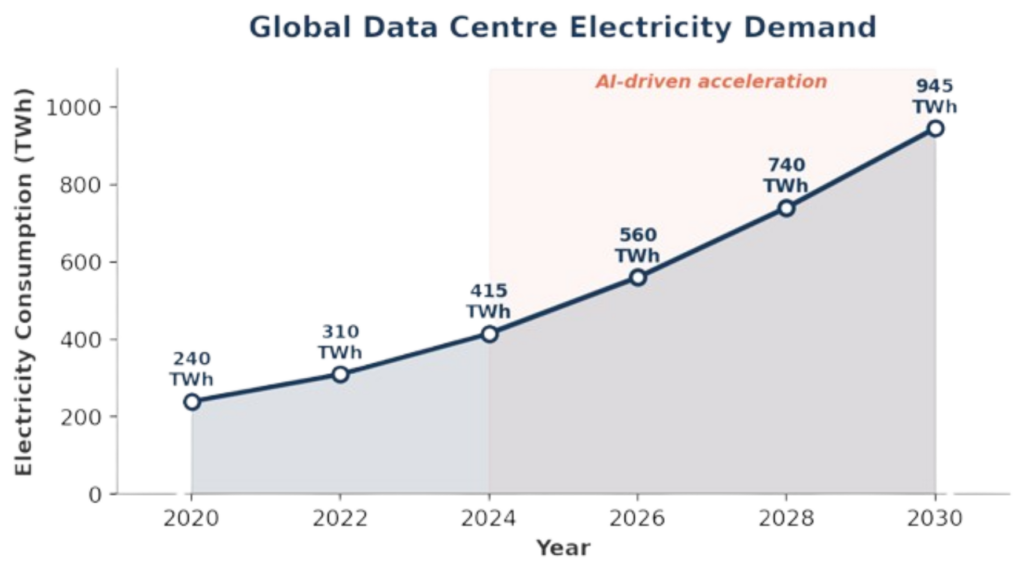

If the energy transition has been a slow-building pressure on grid capacity, the rise of artificial intelligence has been a sudden one. Global electricity consumption from data centres reached approximately 415 TWh in 2024, roughly 1.5% of total global demand. Under the IEA’s base case, that figure is projected to more than double to around 945 TWh by 2030, with AI-driven workloads growing at 30% annually and accounting for nearly half of the net increase.
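The IEA figures above invite a quick growth-rate comparison. This minimal Python sketch applies the standard compound-growth and doubling-time formulas to the 415 TWh (2024) and 945 TWh (2030) endpoints and to the quoted 30% AI growth rate; the function names are illustrative, not from any particular library.

```python
import math


def cagr(start, end, years):
    """Compound annual growth rate implied by two endpoint values."""
    return (end / start) ** (1 / years) - 1


def doubling_time(rate):
    """Years for a quantity growing at `rate` per year to double."""
    return math.log(2) / math.log(1 + rate)


# IEA base case quoted above: data-centre demand in TWh, 2024 -> 2030.
total_rate = cagr(415, 945, 2030 - 2024)
print(f"all data centres: {total_rate:.1%}/yr, "
      f"doubling every {doubling_time(total_rate):.1f} years")
print(f"AI workloads at 30%/yr: doubling every {doubling_time(0.30):.1f} years")
```

Total data-centre demand works out to roughly 15% a year (doubling about every five years), while anything growing at 30% a year doubles in about 2.6 years. That gap is why AI workloads can account for such a large share of the net increase.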

The concentration of this demand is what makes it particularly challenging. In the United States, data centres are expected to consume 260 TWh in 2026, representing 6% of total national electricity consumption. In northern Virginia, home to the world’s densest cluster of data centres, demand has already outpaced local grid capacity. Projections suggest that data centre load could increase average electricity bills by 8% nationally and by more than 25% in the highest-demand markets by 2030. More power plants will not fix this, as the bottleneck is transmission, not generation. Demand is growing on an exponential curve, while the grid is being built out on a linear one.

Regulatory responses: clearing the queue

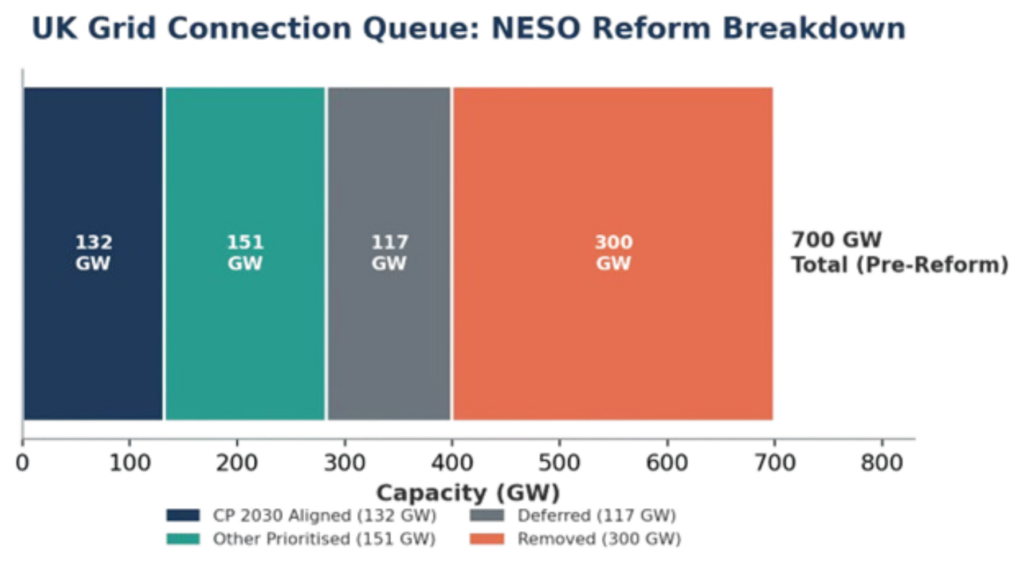

In the United Kingdom, the National Energy System Operator (NESO) completed the most significant reform of the grid connections process in December 2025, replacing a first-come, first-served model that had allowed the queue to grow tenfold in five years to over 700 GW (roughly four times what the country requires). Under the reformed process, 283 GW of projects have been prioritised, with 132 GW aligned to the government’s Clean Power 2030 target. More than 300 GW of speculative projects were removed. Ofgem’s accompanying five-year settlement aims to unlock up to £90 billion of transmission investment.

In the United States, FERC’s updated interconnection rules are attempting to streamline a process where median time from request to commercial operation has doubled: from under two years for projects built in 2000 to 2007, to over four years for those completed in 2018 to 2024. Only 13% of capacity that submitted interconnection requests from 2000 to 2019 had reached commercial operation by the end of 2024; 77% had been withdrawn entirely.

Useful as these reforms are, they address the queue, not the underlying physics. Faster permitting helps. But what the grid really needs is technology that can squeeze more capacity out of the infrastructure that already exists.

The technology layer

What tends to get lost in the energy transition debate is how much latent capacity the existing grid already has. The US Department of Energy estimates that up to 50% more capacity can be squeezed from existing transmission lines, if you have the technology to access it.

A category of solutions known as grid-enhancing technologies (GETs) has emerged to address the gap between what the grid was designed to carry and what it is now being asked to deliver.

- Dynamic line rating (DLR): uses real-time environmental data (ambient temperature, wind speed, solar radiation) to calculate the actual thermal capacity of a line at any given moment. The concept dates to the 1990s (with early prototyping in 1997), but deployment has accelerated sharply as sensor costs have fallen and grid congestion has worsened. Most lines carry static ratings based on conservative worst-case weather assumptions (hot, still, sunny conditions); DLR typically unlocks 20 to 40% more capacity under real conditions.

- Advanced power flow control (APFC): uses modular power electronics to redirect electricity away from congested paths and onto underutilised lines, allowing operators to treat the network as a more flexible, software-defined system.

- Topology optimisation: applies AI and machine learning to reconfigure switching patterns in real time, routing power along the most efficient paths. Purely software-based, it unlocks additional capacity at minimal marginal cost.
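To make the DLR idea concrete, here is a minimal Python sketch of the heat balance behind a thermal rating: the current at which resistive heating plus solar gain equals convective plus radiative cooling at the conductor’s maximum temperature. It is loosely in the spirit of IEEE Std 738 but deliberately simplified. The convection formula, conductor dimensions, resistance, emissivity and both weather scenarios are illustrative assumptions, not values from any real line or from the standard.

```python
import math

SIGMA = 5.67e-8  # Stefan-Boltzmann constant, W/(m^2 K^4)


def ampacity(ambient_c, wind_ms, solar_wm2, *, conductor_max_c=75.0,
             diameter_m=0.0281, resistance_ohm_m=8.69e-5,
             emissivity=0.5, absorptivity=0.5):
    """Steady-state thermal rating in amps from a simplified heat balance.

    At the maximum conductor temperature, heating (I^2 * R + solar gain)
    must equal cooling (convection + radiation), so I = sqrt(net_cooling / R).
    All default parameters are illustrative, not taken from a real conductor.
    """
    perimeter = math.pi * diameter_m              # surface area per metre of line
    delta_t = conductor_max_c - ambient_c
    # Hypothetical convection coefficient (W/m^2 K) that rises with wind speed;
    # IEEE Std 738 uses more detailed correlations.
    h = 4.0 + 14.0 * math.sqrt(max(wind_ms, 0.0))
    q_convection = h * perimeter * delta_t        # W per metre
    t_cond, t_amb = conductor_max_c + 273.15, ambient_c + 273.15
    q_radiation = emissivity * SIGMA * perimeter * (t_cond**4 - t_amb**4)
    q_solar = absorptivity * solar_wm2 * diameter_m  # sunlight on projected area
    net_cooling = max(q_convection + q_radiation - q_solar, 0.0)
    return math.sqrt(net_cooling / resistance_ohm_m)


# Static rating: hot, nearly still air, full sun (worst-case style assumptions).
static = ampacity(ambient_c=40, wind_ms=0.61, solar_wm2=1000)
# Dynamic rating: milder, breezier conditions a line sensor might report.
dynamic = ampacity(ambient_c=25, wind_ms=1.2, solar_wm2=1000)
print(f"static {static:.0f} A, dynamic {dynamic:.0f} A, "
      f"uplift {dynamic / static - 1:.0%}")
```

With these made-up inputs, the cooler and breezier day yields roughly 40% more ampacity than the worst-case static assumption. The point is the mechanism (weather-driven cooling sets the real limit), not the specific number.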

These three categories span the full stack of current and emerging technologies: hardware sensing, actuation and software intelligence. Other categories could include grid-scale storage or advanced conductors, but these are adjacent infrastructure investments rather than technologies that enhance the capacity of existing lines. The three-category framework isolates the core value of GETs: making the current grid work more efficiently, without pouring concrete or stringing new wire. The DOE’s estimates support this: beyond the up-to-50% capacity gain from existing transmission infrastructure, it estimates that virtual power plants and other advanced technologies could each expand US grid capacity by 20 to 100 GW. None of this is speculative: these technologies are commercially available, deployable in months rather than decades, and among the highest-return levers grid operators have.
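The actuation layer, advanced power flow control, can be illustrated with a toy DC power-flow model of two parallel paths. Flow splits in inverse proportion to each path’s reactance, so the electrically shorter path can hit its thermal limit while the longer one sits half-empty. This Python sketch models a power flow controller as adjustable series reactance, a common simplification; the reactances, ratings and the 0.10 pu adjustment are hypothetical.

```python
def path_shares(x_a, x_b):
    """DC approximation: two parallel paths carry flow in inverse proportion to reactance."""
    share_a = x_b / (x_a + x_b)
    return share_a, 1.0 - share_a


def max_transfer(x_a, x_b, rating_a, rating_b):
    """Largest total transfer (MW) before either path reaches its thermal rating."""
    share_a, share_b = path_shares(x_a, x_b)
    return min(rating_a / share_a, rating_b / share_b)


# Hypothetical corridor: path A is electrically shorter but has the lower rating.
X_A, X_B = 0.10, 0.20            # per-unit reactances
RATING_A, RATING_B = 300, 400    # MW

base = max_transfer(X_A, X_B, RATING_A, RATING_B)
# A series power flow controller, modelled as +0.10 pu of reactance on path A,
# pushes flow onto the underused path B.
controlled = max_transfer(X_A + 0.10, X_B, RATING_A, RATING_B)
print(f"uncontrolled limit: {base:.0f} MW, controlled limit: {controlled:.0f} MW")
```

Adding reactance to path A raises the usable transfer from 450 MW to 600 MW without any new wire. In this toy case the ceiling is 700 MW, reached when flows split in proportion to the two ratings (3:4).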

Virtual power plants and the decentralised grid

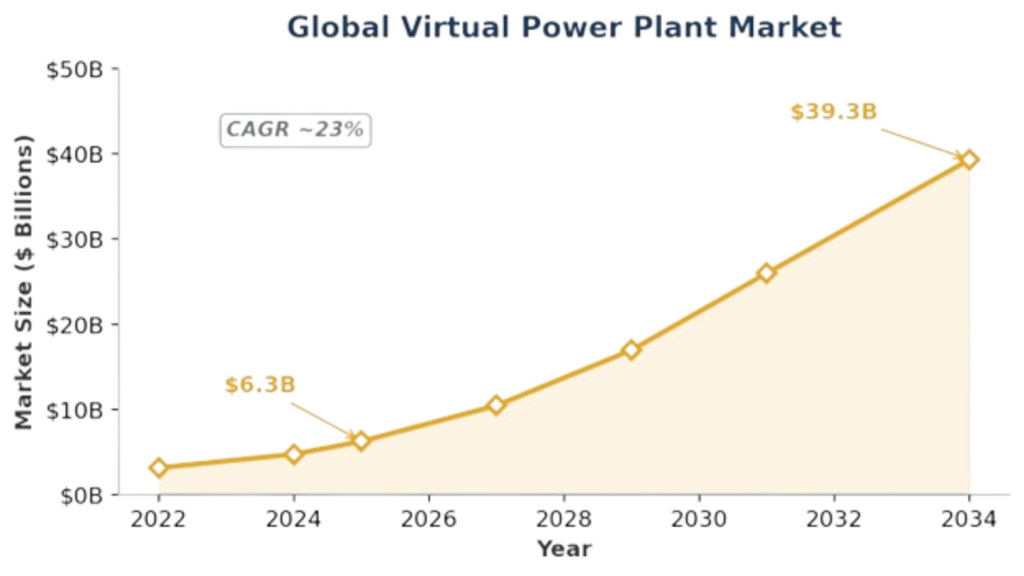

The concept of the virtual power plant (VPP), aggregating thousands of distributed energy resources via software so they behave like a single dispatchable generator, goes back to 1997, but for years the idea remained largely theoretical. What has changed is the cost curve: cheap sensors, cheap batteries and real-time communications have made coordinated dispatch viable at scale. The global VPP market is now valued at illion by 2034, a CAGR of nearly 23%.
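A minimal sketch of the aggregation idea in Python: a fleet of batteries is exposed to the grid as one resource with a single capability figure, and a dispatch target is split across units in proportion to the headroom each can sustain. The `Battery` class, the pro-rata rule and the fleet values are hypothetical illustrations, not how any particular VPP platform works.

```python
from dataclasses import dataclass


@dataclass
class Battery:
    name: str
    max_kw: float       # inverter power limit
    stored_kwh: float   # energy currently in the battery
    reserve_kwh: float  # energy the owner wants kept back (e.g. for outages)

    def headroom_kw(self, hours: float) -> float:
        """Discharge power this unit can sustain for `hours` without breaching its reserve."""
        usable = max(0.0, self.stored_kwh - self.reserve_kwh)
        return min(self.max_kw, usable / hours)


def dispatch(fleet, target_kw, hours=1.0):
    """Treat the fleet as one generator: split a target pro rata to each unit's headroom.

    Returns (per-unit setpoints in kW, total kW actually delivered).
    """
    headroom = {b.name: b.headroom_kw(hours) for b in fleet}
    capability = sum(headroom.values())
    if capability == 0:
        return {name: 0.0 for name in headroom}, 0.0
    delivered = min(target_kw, capability)
    scale = delivered / capability
    return {name: kw * scale for name, kw in headroom.items()}, delivered


fleet = [
    Battery("home-a", max_kw=5, stored_kwh=13.5, reserve_kwh=2.7),
    Battery("home-b", max_kw=5, stored_kwh=4.0, reserve_kwh=2.0),
    Battery("school", max_kw=50, stored_kwh=100, reserve_kwh=20),
]
setpoints, delivered = dispatch(fleet, target_kw=40)
print(delivered, setpoints)
```

A 40 kW request is met by scaling every unit’s headroom by the same factor, and a request above the fleet’s 57 kW capability is capped at 57 kW.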

VPP development runs 40 to 60% cheaper than comparable conventional generation, responds faster (the resources are already connected), and provides the flexibility grids need to manage renewable variability. When NRG Energy agreed in 2025 to pay $12 billion for a package of LS Power assets that combined gas-fired plants with LS Power’s commercial and industrial VPP platform, it sent a clear signal: incumbents now treat distributed flexibility as core infrastructure, not a niche experiment.

What makes VPPs especially interesting is their convergence with grid-edge AI. Machine learning models sitting at the distribution edge can forecast local load, optimise battery dispatch, and coordinate thousands of assets in milliseconds. Tesla’s Autobidder, Sunrun’s aggregation platform, and a growing cohort of startups (Voltus, AutoGrid, Stem) are already doing this at commercial scale. The effect compounds: as more distributed resources come online and more data flows through dispatch algorithms, accuracy improves and the economic case for further decentralisation gets stronger. Each new asset connected makes the platform more valuable for every other participant.
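The optimisation half of that loop can be sketched in a few lines of Python. Given an hourly load forecast (taken as an input here; producing it is the machine-learning part), a binary search finds the lowest flat cap on site load that a fully charged battery can hold without exceeding its energy or inverter limits. The function name, the one-hour time step, the no-recharge simplification and the sample forecast are all assumptions for illustration.

```python
def peak_shave(forecast_kw, energy_kwh, power_kw, tol=0.01):
    """Find the lowest flat cap on site load a full battery can hold.

    forecast_kw: hourly load forecast; energy_kwh: usable stored energy;
    power_kw: inverter limit. Recharging within the horizon is ignored.
    Returns (cap_kw, hourly discharge plan in kW).
    """
    def feasible(cap):
        excess = [max(0.0, load - cap) for load in forecast_kw]
        return max(excess) <= power_kw and sum(excess) <= energy_kwh

    lo, hi = 0.0, max(forecast_kw)   # a cap at the peak needs no battery at all
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if feasible(mid):
            hi = mid                 # invariant: hi is always feasible
        else:
            lo = mid
    discharge = [max(0.0, load - hi) for load in forecast_kw]
    return hi, discharge


forecast = [30, 35, 50, 80, 90, 60]  # hypothetical evening ramp, kW
cap, plan = peak_shave(forecast, energy_kwh=40, power_kw=30)
print(f"peak cut from {max(forecast)} kW to about {cap:.1f} kW; plan {plan}")
```

For the sample forecast the cap settles at about 65 kW: shaving the 80 kW and 90 kW hours down to 65 kW uses exactly the 40 kWh available.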

Grid-scale storage: the buffer the grid needs

As recently as 2015, grid-scale batteries were demonstration projects. By 2020, a 90% drop in lithium-ion costs since 2010 and the rapid build-out of solar had created a straightforward economic case: store the midday surplus, then sell it at the evening peak. Five years on, storage has become a design requirement, and new grids are being planned around it.
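The arbitrage case reduces to simple arithmetic once round-trip losses are included: to deliver a given amount of energy in the evening, a battery must buy more than that at midday. This Python sketch uses hypothetical prices and an assumed 80% round-trip efficiency, and ignores degradation, fees and ancillary-service revenue.

```python
def cycle_margin(energy_out_mwh, buy_price, sell_price, round_trip_eff):
    """Gross margin from one charge/discharge cycle (prices per MWh).

    Delivering energy_out_mwh requires buying energy_out_mwh / round_trip_eff,
    because some energy is lost charging and discharging.
    """
    energy_in_mwh = energy_out_mwh / round_trip_eff
    return energy_out_mwh * sell_price - energy_in_mwh * buy_price


def breakeven_sell_price(buy_price, round_trip_eff):
    """Evening price below which a cycle loses money (ignoring degradation)."""
    return buy_price / round_trip_eff


# Hypothetical 100 MWh discharge, 80% round-trip efficiency, prices in $/MWh.
print(cycle_margin(100, buy_price=20, sell_price=120, round_trip_eff=0.8))
print(breakeven_sell_price(20, 0.8))
```

With these inputs one cycle clears a gross margin of $9,500, and the evening price must exceed $25/MWh (the midday price divided by the efficiency) for the cycle to break even.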

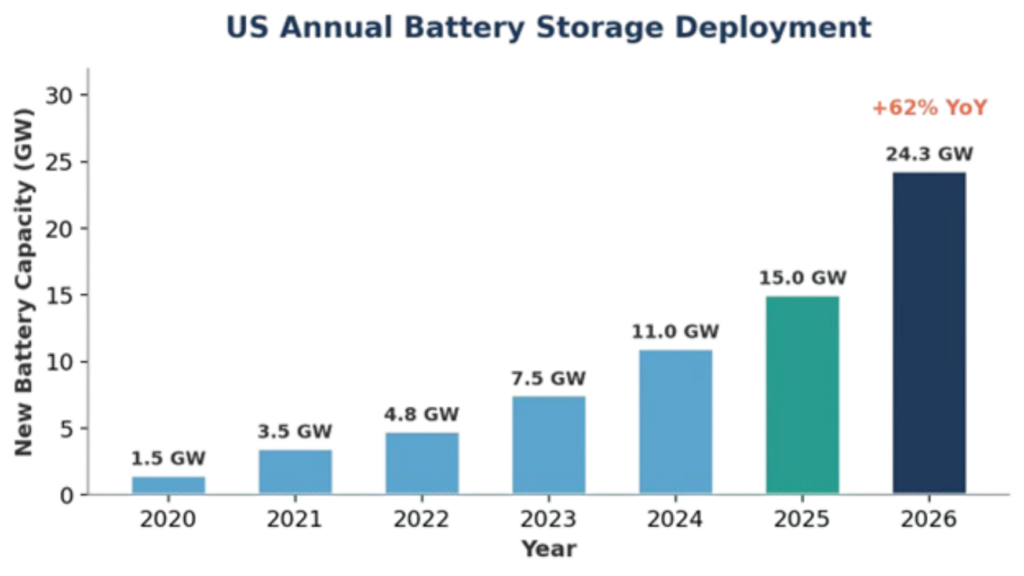

The global grid-scale battery market was valued at approximately $15.8 billion in 2025 and is projected to grow to $44 billion by 2034 (CAGR ~11%). In the United States alone, 24.3 GW of new battery storage capacity is expected to come online in 2026, surpassing the 15 GW record set the previous year.

The drivers are structural:

- Renewable intermittency: as renewable penetration rises, the gap between when energy is generated and when it is consumed widens, particularly in solar-heavy markets where the afternoon surplus must be stored for the evening peak.

- Ancillary services: batteries provide frequency regulation, voltage support, and congestion relief that were historically the domain of gas peakers.

- Transmission deferral: utilities now account for nearly 56% of grid-scale storage deployment, using batteries to defer expensive transmission and distribution investments.[21]

- New chemistries: from iron-air batteries capable of multi-day duration storage to sodium-ion cells that bypass the lithium supply chain entirely, next-generation chemistries are moving from lab to pilot deployment.

Capital movement: the acqui-spike

Power sector M&A hit $142 billion in 2025, nearly double the prior year.

- In December 2025, Alphabet paid $4.75 billion to acquire Intersect Power, a data centre and energy infrastructure developer, gaining several gigawatts of generation and development pipeline in one move.

- Blackstone’s infrastructure division announced an $11.5 billion deal to acquire TXNM Energy, a utility serving 800,000 customers across New Mexico and Texas.

- Constellation Energy paid $26.6 billion for Calpine, creating a 55 GW fleet spanning nuclear, gas, and geothermal, signalling that securing dispatchable generation capacity is now a strategic imperative.

The InfraTech opportunity: capital follows the constraint

Venture capital has taken notice. In 2024, grid infrastructure technologies attracted $6.4 billion in VC funding, more than any other clean energy technology segment.

The pattern of capital deployment is instructive, tracking three broad generations of grid technology:

- Generation 1 (Monitoring & Analytics): sensors, dashboards, and data platforms that helped utilities understand their networks.

- Generation 2 (Active Control): technologies that intervene in grid operation, dynamically rerouting power, managing distributed resources, and optimising storage dispatch in real time.

- Generation 3 (Autonomous Grid Management): AI systems making operational decisions at a speed and granularity that human operators cannot match.

No single category will solve the grid’s technology problem. Progress will come from a combination: grid-enhancing technologies that unlock latent capacity from existing lines, smarter policies that clear connection queues and incentivise deployment, battery storage and VPPs that add flexibility, and software intelligence layers that let the existing grid perform like a network twice its size. Innovative companies are already paving the way, among them Piclo (energy flexibility marketplace), LineVision (dynamic line rating), Smart Wires (power flow control), Voltus and AutoGrid (virtual power plants; AutoGrid was acquired by Uplight in 2023), and Stem (AI-optimised storage).

In March 2026, Tesla, Google, and Carrier launched the Utilize Coalition, arguing that the US grid operates at just 53% of its total capacity on average and that better utilisation could save consumers over $100 billion in the next decade. Demand is growing structurally, the physical grid is constrained, and the technology to bridge the gap (such as DLR hardware and AI-driven dispatch) is proven but barely deployed. The investable surface area across these domains should benefit from strong and relatively durable tailwinds, supported by long-term contracts and creditworthy counterparties. This is where the returns in InfraTech will concentrate.